Meta and Google were found liable for intentionally designing social media platforms deemed addictive and harmful to teenagers, in a case that could open the door to more lawsuits.

Mothers, lawyers and supporters celebrate outside of the court after the jury found Meta and Google liable in a key test case accusing Meta and Google's YouTube of harming children's mental health through addictive social media platforms, in Los Angeles, California, US, on Mar 25, 2026. (Photo: Reuters/Mike Blake)

In the first ruling of its kind, Google and Meta were found liable for intentionally designing social media platforms deemed addictive and harmful to teenagers.

The verdict by a jury in Los Angeles could mark a turning point in the global backlash against social media platforms, and in the wave of lawsuits alleging that they endanger the mental health of children.

Here’s what we know about the case and what it means for the tech giants.

THE TRIAL

Meta and Google were ordered on Wednesday (Mar 25) to pay a combined US$6 million in damages to plaintiff Kaley G.M.

Kaley, a 20-year-old who was a minor when the case began, said she became addicted to Google’s YouTube and Meta’s Instagram at a young age because of their attention-grabbing design.

Jurors found the companies negligent in how they designed their platforms, and said they failed to adequately warn users about potential risks.

Meta and Google said they disagree with the verdict and plan to appeal.

In a separate case, a jury in New Mexico on Tuesday ordered Meta to pay US$375 million after finding that the company misled users about the safety of Facebook and Instagram while enabling child sexual exploitation on those platforms.

The lawsuit was brought by the state's attorney general.

Taken together, the rulings underscore a shift in how the courts - and the public - view the responsibilities of social media companies in protecting children.

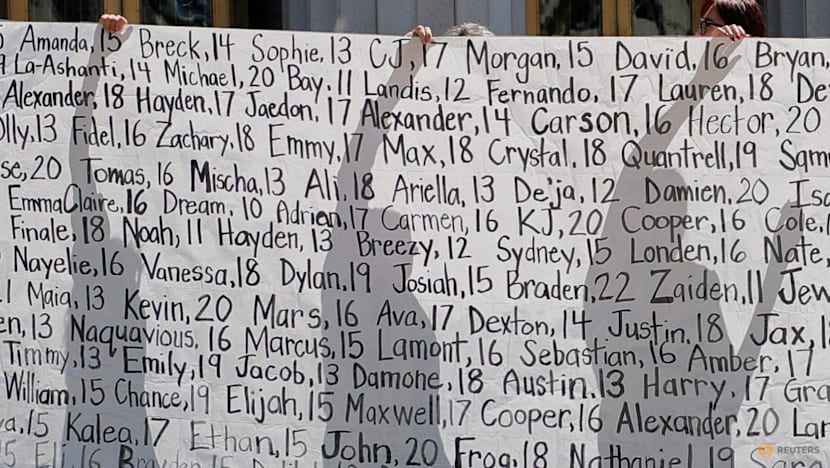

Parents who say they have lost their children due to social media hold up a banner with the names of the children outside the court after the jury found Meta and Google liable in a key test case accusing Meta and Google's YouTube of harming children's mental health through addictive social media platforms, in Los Angeles, California, US, on Mar 25, 2026. (Photo: Reuters/Mike Blake)

HOW THE RULING WAS DECIDED

In the Los Angeles case, Kaley’s lawyers argued that Meta and Google intentionally targeted kids through platform design, rather than content, and made decisions that prioritised profit over safety.

The lawyers' strategy made it harder for companies to hide behind legal provisions such as Section 230, which generally shields platforms from liability over user-generated content.

Jurors were shown internal documents revealing how Meta and Google sought to attract younger users, and heard testimony from executives, including Meta CEO Mark Zuckerberg.

One juror, who identified herself only as Victoria, said the panel focused heavily on what protections the platforms had in place to shield Kaley from harm, as well as on the long-term consequences for future young users.

"We looked at the history of everything that Kaley went through, and what was the process that these platforms had in place that was going to possibly prevent any harm," she said.

Collin Walke, a partner at law firm Hall Estill and head of its cybersecurity and data privacy practice, said the case’s focus on platform design rather than content mattered in the eventual ruling.

The content put on social media is not the responsibility of the companies, Walke explained.

“But what is their responsibility is the manner and method by which they design their algorithms in order to show you that content,” he said.

“And that is a unilateral choice that they make in the design of their products – and that is why they were found liable here.”

Mark Lanier, lawyer for plaintiff Kaley G.M., speaks with the media outside the court after the jury found Meta and Google liable in a key test case accusing Meta and Google's YouTube of harming children's mental health through addictive social media platforms, in Los Angeles, California, US, on Mar 25, 2026. (Photo: Reuters/Mike Blake)

Zuckerberg’s testimony also sealed Meta’s fate, Victoria said, pointing to his inconsistent answers on the stand.

"Some of his testimony was not it - he changed it back and forth, and that didn't sit well with us," she said.

"He's the guru, so to speak, and he should have really, really known what he was going to say to us jurors before he even said anything."

The jury was also motivated by a desire to send a message, Victoria added. "We wanted them to feel it. We wanted them to realise that this was not acceptable."

Walke said the ruling has been a “long time coming”.

“It’s not as though any of the facts that came out at trial were particularly surprising to the general public,” he said.

“We've known for at least a decade now with tell-all books for former Google and Meta employees who come out and say ‘we're using casino-style techniques in our algorithms to try and keep children on our screens so that we can sell more products’,” Walke said.

“So it's not a particular surprise … I think the jury's verdict will ultimately be upheld."

Social media applications are displayed on a mobile phone on Dec 9, 2025. (File photo: Reuters/Hollie Adams)

WHY THIS TRIAL MATTERS

The verdicts in Los Angeles and New Mexico could serve as early test cases for how courts handle similar claims against social media companies.

Hundreds of lawsuits are already pending, with total liability potentially running into the billions of dollars.

Asked whether the verdict sets a precedent for similar cases, Walke said it “very much so” does.

"This is a simple negligence case - did you design your product in a way to harm individuals?” he said.

“And now that that's been established and the facts that came out at this trial that other attorneys … will be getting their hands on it now. So you can assume that it's going to lead to more litigation and probably a lot more settlements."

Walke added: “I think this incentivises legislators to be on the side of the people and go ahead and figure out other ways to regulate tech companies and ensure that they don't do further harm to children or, quite frankly, society itself.”

CONSEQUENCES FOR META AND GOOGLE

The verdicts themselves do not mandate specific changes to the design of social media platforms, nor to the algorithms that make them tick.

However, a second phase of the New Mexico case - set for May and to be decided by a judge - could result in court-ordered changes to Meta's platforms.

A state district court judge will determine whether Meta created a public nuisance, and could impose restrictions and order the company to fund programmes aimed at mitigating potential harms to children.

New Mexico Attorney General Raúl Torrez, who filed the lawsuit against Meta in 2023, says his office wants Meta to improve its enforcement of minimum age limits and its removal of sexual predators, in part by lifting encryption of communications that can interfere with police work.

The penalty amounts are "a slap on the wrist for companies like Meta and YouTube, which are two of the biggest ad sellers in the world", said Jasmine Enberg of Scalable, who tracks the social media industry.

"But if these companies are forced to redesign their products, that poses an existential threat to their business models."